What Problem This Solves

AI-generated artifacts can fail for many reasons: incomplete requirements, hidden platform constraints, dependency ordering issues, or environment-specific behaviors. Instead of treating each failure as an isolated incident, the platform captures these failures and learns from them systematically.

How Self-Evolving AI Works

Rather than making uncontrolled changes, the system learns from real-world execution failures, proposes targeted fixes, and applies improvements only through a supervised approval workflow.

Automatic Detection

Failed runs, validation errors, and generation issues are detected automatically

AI-Assisted Analysis

The system analyzes logs, task history, and error messages to identify recurring patterns

Fix Proposal

AI proposes targeted improvements to generation logic, validation rules, or dependency handling

Human Review

Proposed fixes require explicit administrative review and approval

Controlled Deploy

Approved fixes are deployed in a controlled manner

Auto Re-process

Impacted requirements reprocess automatically using the improved logic
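
The six steps above can be read as a small state machine with a single human gate. The sketch below is illustrative only and is not the platform's implementation: `Stage`, `FixCandidate`, and `advance` are hypothetical names, and the only rule it encodes is the one stated above, that a proposed fix cannot move forward without explicit approval.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Stage(Enum):
    DETECTED = auto()
    ANALYZED = auto()
    PROPOSED = auto()
    APPROVED = auto()
    DEPLOYED = auto()
    REPROCESSED = auto()


# Each stage may only advance to the one that follows it; none can be skipped.
NEXT_STAGE = {
    Stage.DETECTED: Stage.ANALYZED,
    Stage.ANALYZED: Stage.PROPOSED,
    Stage.PROPOSED: Stage.APPROVED,
    Stage.APPROVED: Stage.DEPLOYED,
    Stage.DEPLOYED: Stage.REPROCESSED,
}


@dataclass
class FixCandidate:
    """Hypothetical record tracking one failure through the workflow."""
    failure_id: str
    stage: Stage = Stage.DETECTED
    approved_by: Optional[str] = None
    history: list = field(default_factory=list)

    def advance(self, approver: Optional[str] = None) -> Stage:
        target = NEXT_STAGE.get(self.stage)
        if target is None:
            raise ValueError("workflow already complete")
        # Human-review gate: reaching APPROVED requires a named approver.
        if target is Stage.APPROVED:
            if not approver:
                raise PermissionError("approval requires a human reviewer")
            self.approved_by = approver
        self.history.append((self.stage, target))
        self.stage = target
        return target


fix = FixCandidate("run-1042")             # hypothetical failure ID
fix.advance()                              # DETECTED -> ANALYZED
fix.advance()                              # ANALYZED -> PROPOSED
fix.advance(approver="admin@example.com")  # PROPOSED -> APPROVED
```

Calling `advance()` without an approver at the `PROPOSED` stage raises `PermissionError` and leaves the record unchanged, which mirrors the requirement that nothing deploys without review.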

Peek Inside the Platform

Real screenshots from our supervised, self-evolving AI system

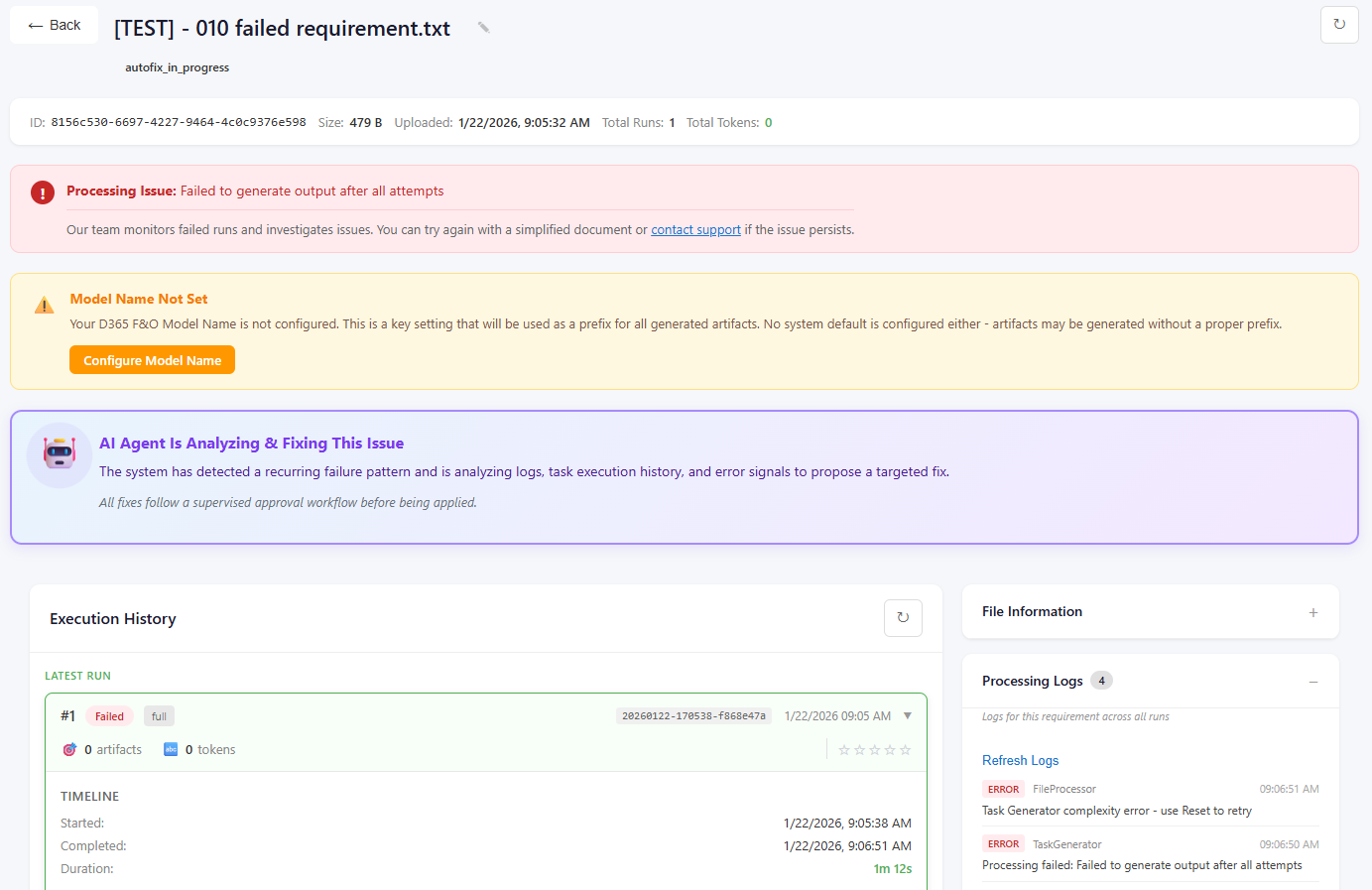

Supervised Auto-Fix Analysis

Users see real-time status as the system analyzes execution failures and identifies recurring error patterns. The AI proposes targeted fixes, which are reviewed and approved before deployment. Analysis and resolution typically complete within 5–15 minutes, with full transparency throughout the process.

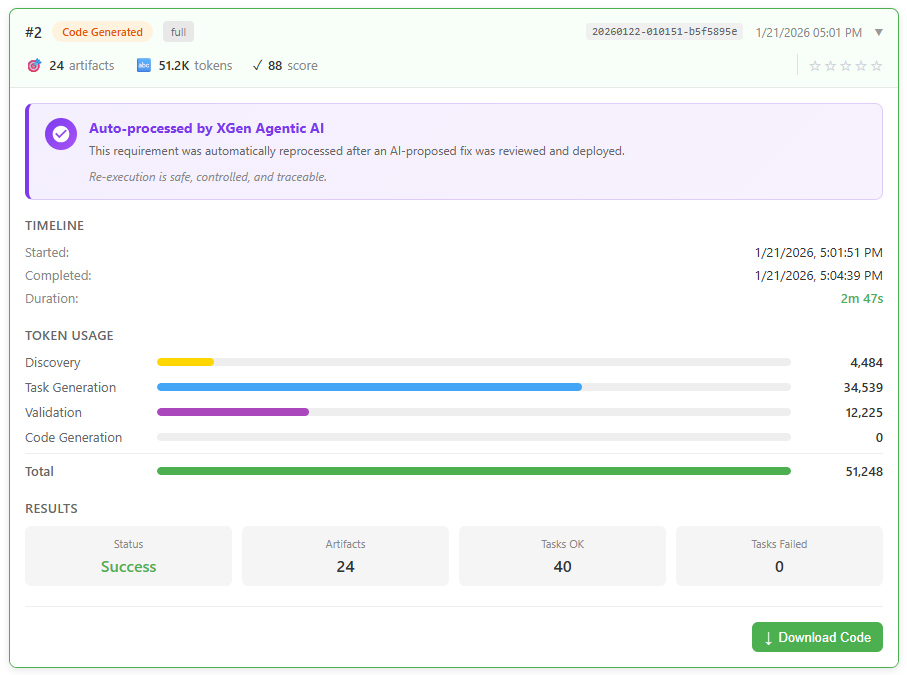

Automatic Reprocessing After Approved Fixes

Once a fix is reviewed and deployed, affected requirements are automatically reprocessed using the improved logic. In this example, 19 artifacts were regenerated in 2 minutes 30 seconds, with no manual retriggering required. All reprocessing is controlled, traceable, and based on approved changes.
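
One way to picture targeted reprocessing is as a filter over the requirements whose last failure matches the pattern an approved fix addresses. The sketch below is a simplified assumption, not the platform's actual logic: `reprocess_impacted`, the `last_errors` mapping, the pattern strings, and the `regenerate` callback are all hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple


def reprocess_impacted(
    last_errors: Dict[str, Optional[str]],
    fixed_pattern: str,
    fix_id: str,
    regenerate: Callable[[str], bool],
) -> List[Tuple[str, str, bool]]:
    """Re-run only the requirements whose last failure matches the fixed pattern.

    Returns an audit trail of (requirement_id, fix_id, succeeded) tuples so each
    regeneration can be traced back to the approved fix that triggered it.
    """
    audit = []
    for requirement_id, pattern in sorted(last_errors.items()):
        if pattern == fixed_pattern:
            audit.append((requirement_id, fix_id, regenerate(requirement_id)))
    return audit
```

Requirements that failed for unrelated reasons, or that did not fail at all, are left untouched, and every regeneration carries the ID of the approved fix that caused it.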

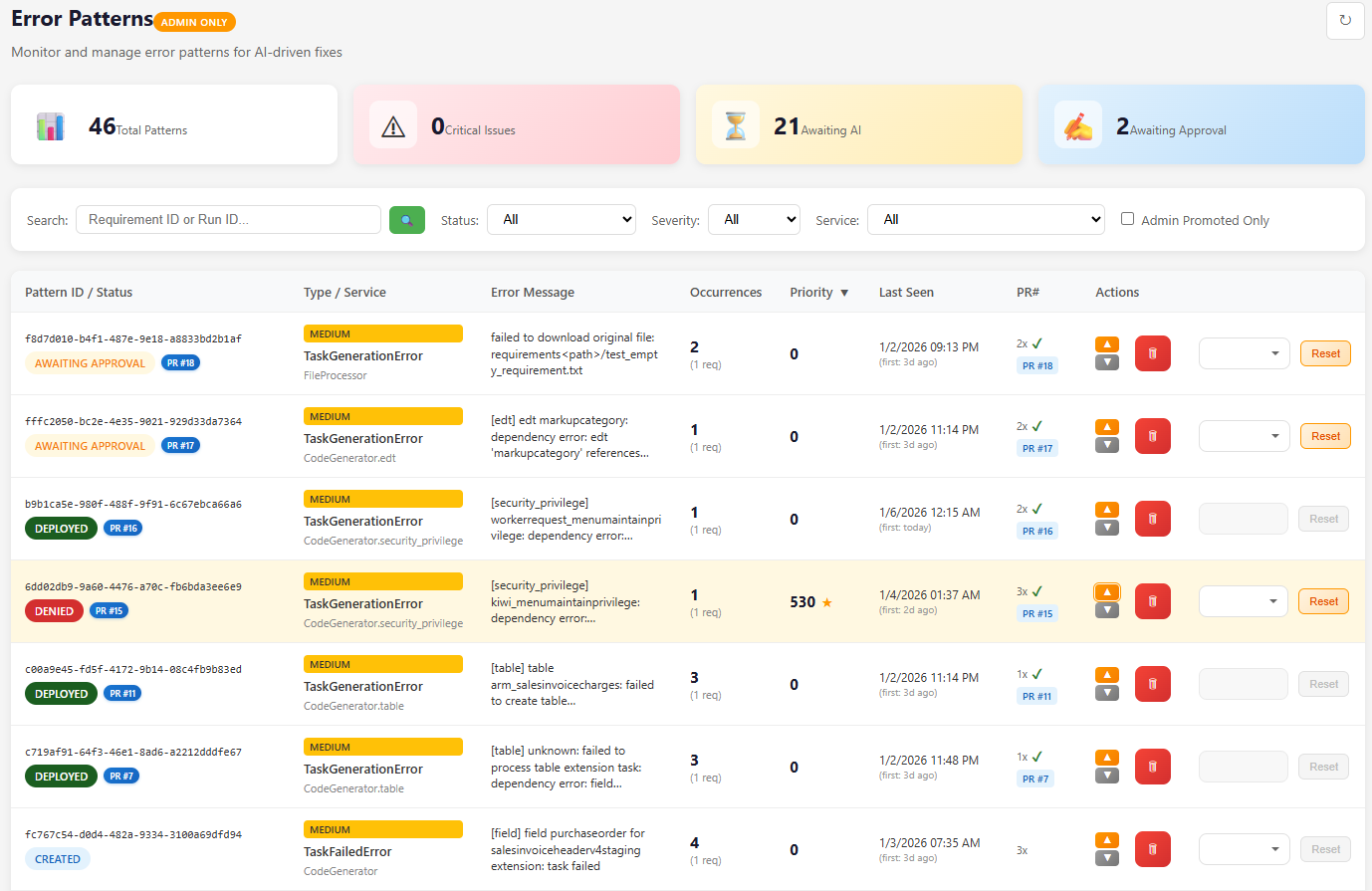

Centralized Error Pattern Intelligence

Errors are aggregated across all runs and environments and tracked by frequency, severity, and impact. The system manages the full lifecycle, from detection and analysis through approval and controlled deployment, so improvement is continuous without unpredictable behavior.
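
As a rough illustration of what tracking by frequency, severity, and impact could look like, the sketch below groups error events by a normalized pattern and ranks the groups. The `ErrorEvent` fields, the 1 to 3 severity scale, and the ranking order are assumptions made for the example, not the platform's data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorEvent:
    pattern: str         # normalized error signature (hypothetical)
    environment: str
    severity: int        # assumed scale: 1 = low, 3 = high
    requirement_id: str


def summarize(events):
    """Group events by pattern and rank by severity, then by frequency."""
    groups = defaultdict(list)
    for event in events:
        groups[event.pattern].append(event)

    summary = []
    for pattern, items in groups.items():
        summary.append({
            "pattern": pattern,
            "frequency": len(items),
            "max_severity": max(e.severity for e in items),
            "environments": sorted({e.environment for e in items}),
            # Impact is approximated here as the number of distinct requirements hit.
            "impacted_requirements": len({e.requirement_id for e in items}),
        })
    summary.sort(key=lambda row: (-row["max_severity"], -row["frequency"], row["pattern"]))
    return summary
```

Ranking by severity first and frequency second is one reasonable choice; a real system might weight the two differently.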

Governance & Safety Model

Self-evolution does not mean uncontrolled behavior.

| Stage | Control |
|---|---|
| Detection | Automatic |
| Analysis | AI-assisted |
| Fix Proposal | AI |
| Approval | Human |
| Deployment | Controlled |
| Re-execution | Automatic |

What "Self-Evolving" Means

Understanding the scope and boundaries of this capability

It means:

- Learning from real execution failures

- Reducing repeat errors over time

- Improving generation accuracy through approved fixes

It does NOT mean:

- Unreviewed code changes

- Autonomous deployment without oversight

- Modifying customer environments directly

Why This Matters

Self-Evolving AI complements — rather than replaces — developer expertise

Fewer Repeated Failures

Errors fixed once stay fixed across all projects

Continuous Improvement

Driven by real usage, not theoretical scenarios

Higher Trust

In AI-generated artifacts through proven reliability

Reduced Manual Effort

Less troubleshooting, more productive work

24/7

Autonomous Monitoring

<15min

Average Fix Time

100%

Automatic Reprocessing

Experience Self-Evolving AI

Our Artifact Generator is powered by this self-evolving AI system. Issues that would traditionally require support tickets are identified, analyzed, and resolved through a controlled improvement process.

Try XGen CloudForge

This capability is continuously refined as part of the platform's long-term vision, while current releases remain focused on developer-controlled, AI-assisted workflows.